Gases have higher entropies than solids or liquids because their molecules move in a far more disordered way. That means that during a reaction in which the number of gas molecules changes, the entropy changes too: it generally rises when the reaction produces more gas molecules than it consumes, and falls when it produces fewer.

The word is also used figuratively for a gradual slide into disorder, as in "After seven years in the Hammerskins, exhaustion and entropy were setting in" or "Succumbing to something closer to entropy than evolution, the watercolor starts to puddle and bruise."
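
The rule of thumb about gas molecules can be sketched in a few lines of Python. This is only a qualitative illustration: the function names `delta_n_gas` and `expected_entropy_sign` and the dictionary layout are assumptions made for this example, and the sketch ignores every contribution to entropy other than the count of gas molecules.

```python
def delta_n_gas(reactants, products):
    """Return moles of gas in the products minus moles of gas in the reactants.

    Each argument maps a species name to (stoichiometric coefficient, phase),
    where phase is "g", "l", "s", or "aq". This layout is hypothetical.
    """
    def gas_moles(side):
        return sum(coeff for coeff, phase in side.values() if phase == "g")

    return gas_moles(products) - gas_moles(reactants)


def expected_entropy_sign(reactants, products):
    """Qualitative guess only: more gas molecules suggests higher entropy."""
    dn = delta_n_gas(reactants, products)
    if dn > 0:
        return "increase"
    if dn < 0:
        return "decrease"
    return "undetermined"


# CaCO3(s) -> CaO(s) + CO2(g): one mole of gas is produced.
decomposition = expected_entropy_sign(
    {"CaCO3": (1, "s")},
    {"CaO": (1, "s"), "CO2": (1, "g")},
)

# N2(g) + 3 H2(g) -> 2 NH3(g): four moles of gas become two.
synthesis = expected_entropy_sign(
    {"N2": (1, "g"), "H2": (3, "g")},
    {"NH3": (2, "g")},
)

print(decomposition, synthesis)
```

When the gas count does not change, the sketch returns "undetermined" rather than guessing, because the sign then depends on details this simple rule cannot capture.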

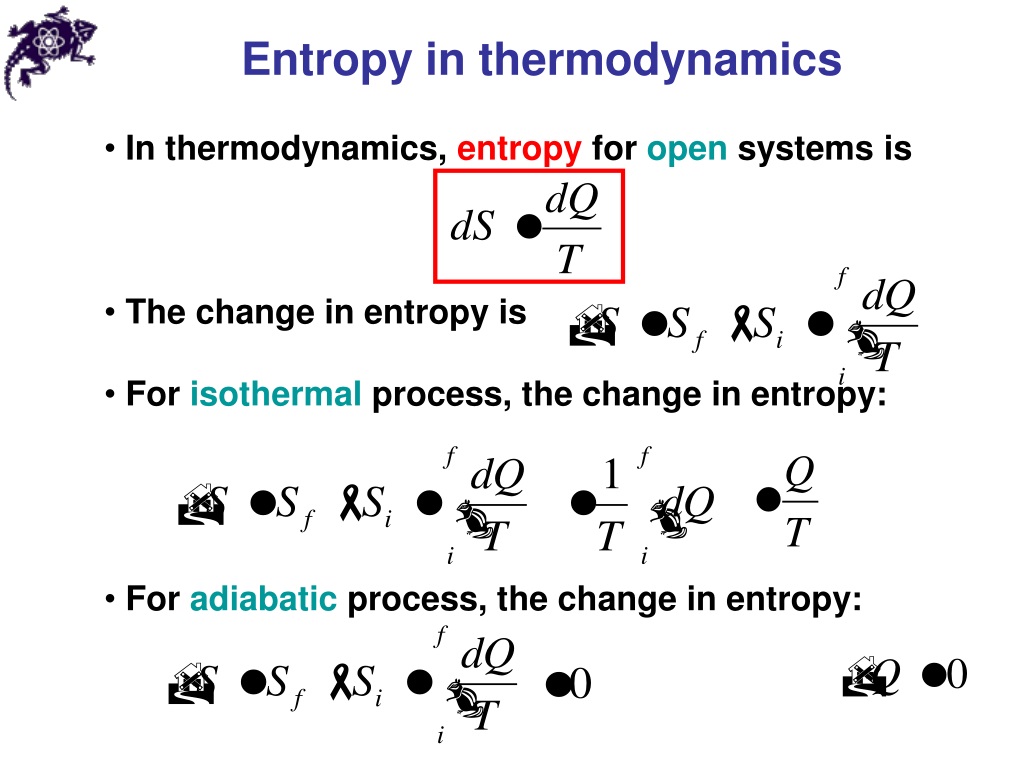

Entropy is a scientific concept, as well as a measurable physical property, that is most commonly associated with a state of disorder, randomness, or uncertainty. The term and the concept are used in diverse fields, from classical thermodynamics, where it was first recognized, to the microscopic description of nature in statistical physics.

Entropy also describes how much energy is not available to do work. Although all forms of energy can be used to do work, it is not possible to use the entire available energy for work. The more disordered a system and the higher its entropy, the less of the system's energy is available to do work.

The general expression for entropy change is $\Delta S = \dfrac{q_{\mathrm{rev}}}{T}$, where $q_{\mathrm{rev}}$ is the heat exchanged reversibly and $T$ is the absolute temperature. For a spontaneous process, the total entropy change of the system and the surroundings must be greater than zero, that is, $\Delta S_{\mathrm{total}} = \Delta S_{\mathrm{system}} + \Delta S_{\mathrm{surroundings}} > 0$. The idea reaches beyond chemistry: the tendency for systems to move from low entropy to high entropy, the particular spacetime conditions of our solar system, and the indeterminacy of the future combine to create our particular conception of time.

In information theory, entropy measures uncertainty over a set of outcomes. Here, $c$ is the number of different classes you have; in the case of a coin, $c = 2$: heads (1) or tails (0). So the entropy of a fair coin, with $p_i = 0.5$ for each class, is

$$H = -\sum_{i=1}^{c} p_i \log_2 p_i = -\left(0.5 \log_2 0.5 + 0.5 \log_2 0.5\right) = 1 \text{ bit}.$$
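
The coin example can be checked numerically. The sketch below assumes the standard Shannon form H = -Σ p_i log2(p_i), measured in bits; the function name `shannon_entropy` is chosen for this illustration and does not come from the original text.

```python
import math


def shannon_entropy(probabilities):
    """Shannon entropy in bits: H = -sum(p_i * log2(p_i)) over all classes."""
    if not math.isclose(sum(probabilities), 1.0):
        raise ValueError("probabilities must sum to 1")
    # Classes with p == 0 are skipped: p * log2(p) tends to 0 as p -> 0.
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


fair_coin = shannon_entropy([0.5, 0.5])    # c = 2 classes, equally likely
biased_coin = shannon_entropy([0.9, 0.1])  # same classes, less uncertainty

print(f"fair coin:   {fair_coin:.3f} bits")
print(f"biased coin: {biased_coin:.3f} bits")
```

A fair coin gives exactly 1 bit, the maximum for two classes; any bias lowers the value because the outcome becomes easier to predict.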

Entropy is a state function, denoted by the letter S. It is often described as the "state of disorder" of a system, a shorthand that is not strictly accurate. More precisely, entropy is a measure of the number of possible arrangements the atoms in a system can have. The higher the entropy of an object, the more uncertain we are about its microscopic state; in this sense, entropy is a measure of uncertainty or randomness. Keeping a system ordered takes energy, even if it takes only a little to pick up one sock, and a system that loses too much usable energy drifts toward disorder.

The entropy of an object is also a measure of the amount of energy that is unavailable to do work. When a system receives an amount of energy $q$ reversibly at a constant temperature $T$, the entropy increase $\Delta S$ is defined by

$$\Delta S = \frac{q}{T}.$$

In other words, the entropy change is the amount of energy transferred divided by the temperature at which the process takes place. Thus, entropy has units of energy per kelvin, J K⁻¹. Entropy is an extensive property, so its value depends on the mass of the system. A change in entropy can be positive or negative.

If the entropy of the system decreases, the entropy of the environment must increase such that the sum of the two entropies can only increase or stay the same, but never decrease. Jacob Bekenstein realized in 1974 that black holes also have entropy.

Standard entropies are measured from absolute zero. All molecular motion is taken to cease at absolute zero (0 K); therefore, the entropy of a pure crystalline substance at absolute zero is defined to be equal to zero.

In information theory, the mathematical formula of Shannon's entropy for a source with probabilities $p_i$ is $H = -\sum_i p_i \log_2 p_i$.

However, the entropic quantity we have defined is very useful in determining whether a given reaction will occur.
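
As a rough worked example of ΔS = q/T and of the rule that the combined entropy of system and environment cannot decrease, the sketch below considers one mole of ice melting in a warm room. The heat of fusion (about 6010 J per mole), the room temperature of 298.15 K, and the function name `entropy_change` are assumptions made for illustration. Treating the room as a reservoir at fixed temperature is an idealization.

```python
def entropy_change(q_joules, temperature_kelvin):
    """Entropy change in J/K for heat q exchanged at constant temperature T."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be a positive value in kelvin")
    return q_joules / temperature_kelvin


Q_FUSION = 6010.0  # J absorbed by 1 mol of ice on melting (approximate, assumed)
T_MELT = 273.15    # K, melting point of ice at atmospheric pressure
T_ROOM = 298.15    # K, assumed temperature of the surroundings

# The ice (system) absorbs heat at its melting point.
ds_system = entropy_change(Q_FUSION, T_MELT)

# The room (surroundings) gives up the same heat at its own temperature.
ds_surroundings = entropy_change(-Q_FUSION, T_ROOM)

ds_total = ds_system + ds_surroundings

print(f"system:       {ds_system:+.2f} J/K")
print(f"surroundings: {ds_surroundings:+.2f} J/K")
print(f"total:        {ds_total:+.2f} J/K")
```

The surroundings lose entropy, but the system gains slightly more because the same heat is divided by a lower temperature, so the total is positive and melting is spontaneous under these assumed conditions.
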
It is evident from everyday experience that ice melts, iron rusts, and gases mix together: these processes occur spontaneously. The energy released when steam condenses to liquid water can help meet some of our growing energy needs. The apparent discrepancy in the entropy change between an irreversible and a reversible process is resolved by considering the entropy changes of both the system and the surroundings, as described in the second law of thermodynamics.
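
One way to see how the reversible and irreversible cases differ is to work through a standard textbook example that is not part of the original text: the isothermal expansion of $n$ moles of an ideal gas from volume $V_1$ to $V_2 > V_1$ at temperature $T$. Because entropy is a state function, the system's entropy change is the same along both paths; only the surroundings differ.

$$
\begin{aligned}
\text{Both paths:} \quad & \Delta S_{\mathrm{system}} = nR \ln\frac{V_2}{V_1} \\
\text{Reversible expansion:} \quad & \Delta S_{\mathrm{surroundings}} = -\frac{q_{\mathrm{rev}}}{T} = -nR \ln\frac{V_2}{V_1}, \qquad \Delta S_{\mathrm{total}} = 0 \\
\text{Free expansion into vacuum } (q = 0)\text{:} \quad & \Delta S_{\mathrm{surroundings}} = 0, \qquad \Delta S_{\mathrm{total}} = nR \ln\frac{V_2}{V_1} > 0
\end{aligned}
$$

In the reversible case the total entropy is unchanged, while in the irreversible case it increases, which is what the second law requires.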
